There are lots of methods for getting this working spread over the internet. Some work but are a mess (and involve faking monitor connections to the nVidia GPUs using some Xorg trickery); others just flat out don’t work.

This method works great, provided you don’t want fan control over the GPUs. So just get some decent case fans and you’re golden.

I used the nVidia Ubuntu PPA for drivers; you can use the binary blob .run file, and frankly it’ll probably save you headaches in the long run (like, for example, when the drivers update and freakin’ break everything! /rant)

First, I got a more up-to-date kernel on Ubuntu 16.04…

apt-get install linux-headers-4.10 linux-headers-4.10-generic linux-image-4.10-generic linux-image-extras-4.10-generic

You should have dkms installed, but if not, pull it in with:

apt-get install dkms

reboot

Add nVidia drivers PPA:

add-apt-repository ppa:graphics-drivers/ppa

apt-get update

apt-get install nvidia-375

apt-get install nvidia-settings nvidia-prime

reboot

At this point, the graphics may work, or they may not. If they do, great. If they don’t, well, it’s easy to fix by reinstalling nvidia-settings and nvidia-prime (apt-get install --reinstall nvidia-settings nvidia-prime).

Set the focus GPU to Intel via the system tray nvidia-settings app. Log out, then log back in again. 3D acceleration should still work.

Download the CUDA repo .deb (I used the network one, but local works too) and install it using dpkg. There should not be any unmet or conflicting dependencies.

dpkg -i [cudarepofile.deb]

apt-get update

apt-get install cuda

This will pull in CUDA8.0. Again, like I said, you can always use the local .run file, which will save you updating headaches.

Now, at this point, CUDA wants to reinstall the GPU driver. I let it. It’ll break 3D acceleration, but reinstalling nvidia-settings and nvidia-prime fixes it again.

At this point, nvidia-smi (the nvidia system management interface) will stop working. This is because it can’t cope with the idea of you not using the nVidia GPUs for Xorg. Dumb, given the drive nVidia has been putting into GPGPU, but nevertheless true.

To get it working again, first get the existing link in /usr/bin out of the way. You can either remove it or rename it. I rename, others might remove:

mv /usr/bin/nvidia-smi /usr/bin/nvidia-smi.backup

Then place the following script in a file called “nvidia-smi”:

vi /usr/bin/nvidia-smi

—

#!/bin/bash

LD_PRELOAD=/usr/lib/nvidia-375/libnvidia-ml.so /etc/alternatives/x86_64-linux-gnu_nvidia_smi "$@"

—

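OK. Save the file and make it executable, since a file newly created in vi won’t be:

chmod +x /usr/bin/nvidia-smi

Now, you need to change the /nvidia-375/ bit to whatever driver version you are using. If it’s 375, awesome. If it’s 378, you’ll get an error until you change it.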
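If you’re not sure which version directory you have, something like the following can look it up. This is only a sketch: find_nvidia_ml_lib is a name I made up, it assumes the PPA’s /usr/lib/nvidia-NNN layout used above, and if several driver versions are installed it simply reports the first one the shell’s glob finds.

```shell
# find_nvidia_ml_lib [BASE]: print the first libnvidia-ml.so found in a
# BASE/nvidia-* directory. BASE defaults to /usr/lib (an assumption based
# on the paths used in this post).
find_nvidia_ml_lib() {
    local base="${1:-/usr/lib}" lib
    for lib in "$base"/nvidia-*/libnvidia-ml.so; do
        # An unmatched glob stays as the literal pattern, so test for existence.
        if [ -e "$lib" ]; then
            printf '%s\n' "$lib"
            return 0
        fi
    done
    return 1
}
```

Running find_nvidia_ml_lib with no arguments prints the path to put after LD_PRELOAD=, or returns non-zero if nothing was found.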

The “$@” bit is necessary so that the script passes whatever arguments you give it through to the real nvidia-smi; without it, it’ll throw a nasty error.
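To see what “$@” is doing, here’s a tiny demo that has nothing to do with the GPUs themselves. count_args is a hypothetical stand-in for the real nvidia-smi binary, and the two wrapper functions differ only in whether they forward their arguments.

```shell
# count_args is a hypothetical stand-in for the real binary: it reports
# how many arguments it received.
count_args() {
    echo "$#"
}

# Forwards every argument unchanged, as the wrapper script does.
wrapper_quoted() {
    count_args "$@"
}

# Forwards nothing: this is what the wrapper would do without "$@".
wrapper_bare() {
    count_args
}

wrapper_quoted -i 0,1 -pm ENABLED   # prints 4
wrapper_bare -i 0,1 -pm ENABLED     # prints 0
```

With the quoted form, options such as -i 0,1 -pm ENABLED reach the real program exactly as typed.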

So, nvidia-smi works, but some things will still throw up wobblers. To fix this, add:

—

# CUDA stuff

export PATH=/usr/local/cuda/bin:$PATH

export LD_LIBRARY_PATH=/usr/local/cuda/lib:$LD_LIBRARY_PATH

# Fixing Intel/nVidia conflict (nVidia for compute, Intel for display)

export LD_LIBRARY_PATH=/usr/local/cuda/lib64:/usr/lib/nvidia-375:$LD_LIBRARY_PATH

—

to your .bashrc.
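One wrinkle with plain export lines is that every nested shell prepends the same directories again, so the variables slowly fill with duplicates. If that bothers you, a small helper can keep them tidy. This is a sketch rather than part of my setup: prepend_path is a hypothetical name, and the nvidia-375 directory is the same assumption as above.

```shell
# prepend_path VALUE DIR: print VALUE with DIR added at the front,
# unless DIR is already one of its colon-separated entries.
prepend_path() {
    local value="$1" dir="$2"
    case ":${value}:" in
        *":${dir}:"*) printf '%s\n' "$value" ;;           # already present
        "::")         printf '%s\n' "$dir" ;;             # value was empty
        *)            printf '%s\n' "${dir}:${value}" ;;  # add at the front
    esac
}

# nVidia driver libraries (change 375 to match your driver), then CUDA.
export LD_LIBRARY_PATH="$(prepend_path "${LD_LIBRARY_PATH:-}" /usr/lib/nvidia-375)"
export LD_LIBRARY_PATH="$(prepend_path "$LD_LIBRARY_PATH" /usr/local/cuda/lib64)"
```

The same function works for PATH, for example with /usr/local/cuda/bin.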

To get persistence working (that is, power state management, which will allow the card to clock down when it’s not being used) edit the following:

vi /etc/rc.local

and add

/usr/bin/nvidia-smi -i 0,1 -pm ENABLED

above the “exit 0”

This tells nvidia-smi to select both GPUs and enable persistence mode so that the GPUs actually freakin’ work properly. This has the side effect of making the nvidia-smi command instant again (it wasn’t after switching to the Intel iGPU). Obviously, if you’ve only got a single GPU, you just tell it -i 0… if you’ve got three or four, you can add to the list accordingly. GPU numbering always starts from 0.
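If you’d rather not type the index list by hand, it can be generated from the number of GPUs. A minimal sketch, with gpu_index_list being a name I made up:

```shell
# gpu_index_list COUNT: print "0,1,...,COUNT-1" for use with nvidia-smi -i.
gpu_index_list() {
    local count="$1" list="" i=0
    while [ "$i" -lt "$count" ]; do
        # Add a comma only when the list already has an entry.
        list="${list}${list:+,}${i}"
        i=$((i + 1))
    done
    printf '%s\n' "$list"
}

# Example for rc.local with two GPUs:
#   /usr/bin/nvidia-smi -i "$(gpu_index_list 2)" -pm ENABLED
```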

Whenever a driver update occurs, it’ll replace the nvidia-smi script with the actual program again. Let it. CUDA will break when you reboot, but it’s easy enough to remove the symlink at /usr/bin/nvidia-smi and replace it with that little script again. (So long as the drivers don’t update, the setup survives any kernel updates without breaking.)
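Since this has to be redone after every driver update, it may be worth keeping the repair in a function. The following is a sketch under the same assumptions as the script above (the /usr/lib/nvidia-NNN layout and the /etc/alternatives path); install_smi_wrapper and its two parameters are my own invention, and it needs to run as root to touch /usr/bin.

```shell
# install_smi_wrapper TARGET VERSION
# Replaces TARGET with the wrapper script when a driver update has put a
# symlink back. TARGET and VERSION are parameters of this sketch, e.g.
# /usr/bin/nvidia-smi and 375.
install_smi_wrapper() {
    local target="$1" version="$2"
    if [ ! -L "$target" ]; then
        echo "$target is not a symlink; leaving it alone"
        return 0
    fi
    rm -- "$target"
    # \$@ is escaped so that the literal "$@" ends up in the wrapper.
    cat > "$target" <<EOF
#!/bin/bash
LD_PRELOAD=/usr/lib/nvidia-${version}/libnvidia-ml.so /etc/alternatives/x86_64-linux-gnu_nvidia_smi "\$@"
EOF
    chmod 755 "$target"
}

# Example (as root): install_smi_wrapper /usr/bin/nvidia-smi 375
```

It only acts when the target is a symlink, so running it twice, or running it when the wrapper is already in place, does no harm.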

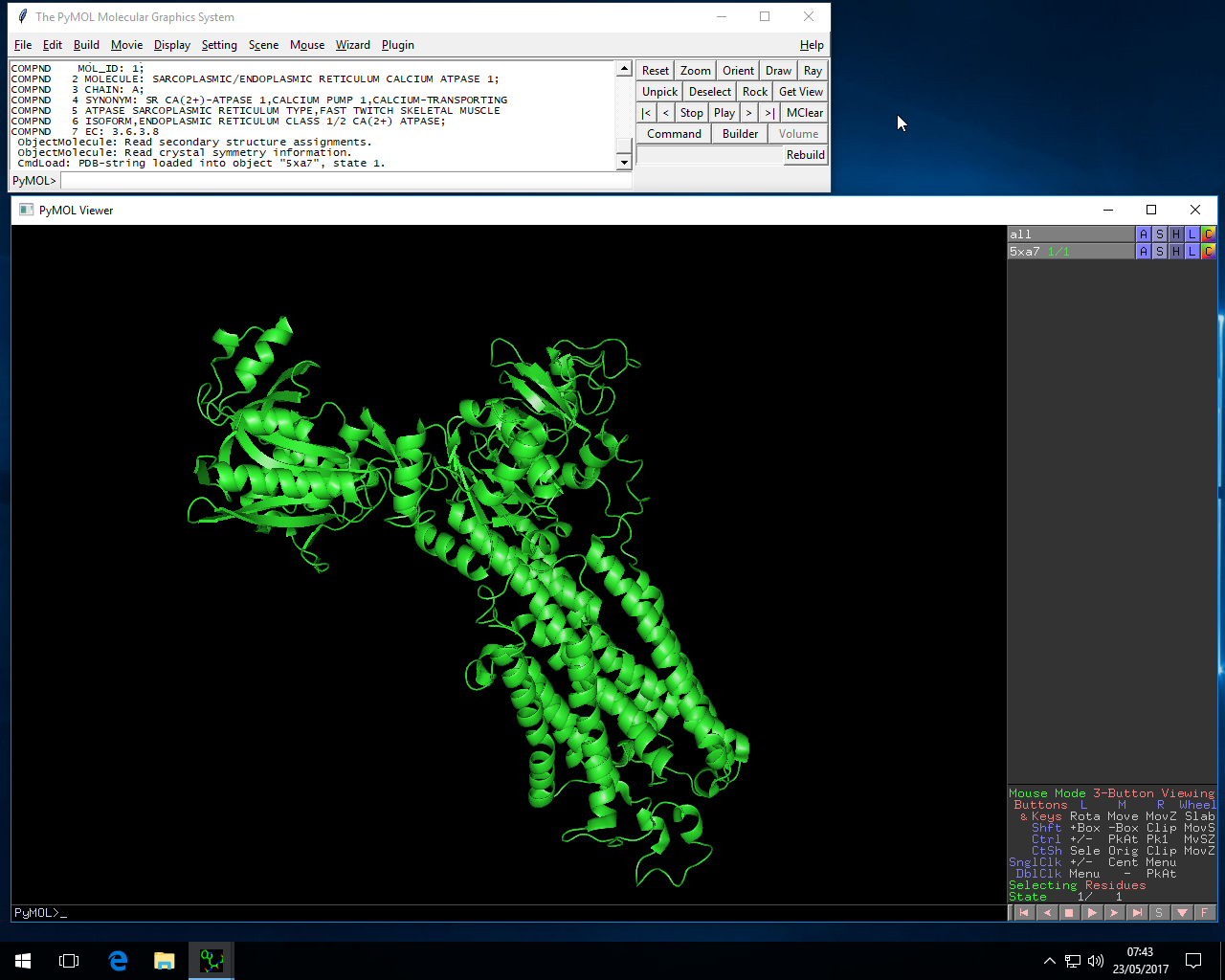

I’m using this to drive a 4K@60Hz monitor via the iGPU while using a pair of GTX1080’s for compute.

The only thing I can’t get working is fan control. I’d rather have my GPUs loud than have them cooking, so I compensate with some fairly chunky intake fans pointing straight across the GPUs.

I’ve seen people (some fairly high-ranking Professors, as well) suggest watercooling. Well, yes, that is a possible solution. It’s just a shame that I’m a little shy about watercooling 24/7 systems that are largely unattended, because… I’ve had two watercooling pumps, of different types, die on me recently. I was fortunate that one system was idle when it happened, but it still reached “idle” at 66 degrees C. That was with full-copper (read: expensive) waterblocks, and CPU only. I don’t doubt that GPUs would be dead if that had happened to them.

So, yeah, I’m a little leery of watercooling systems for high compute loads unless backed by manufacturer warranty. Haha.